Nishant Kumar

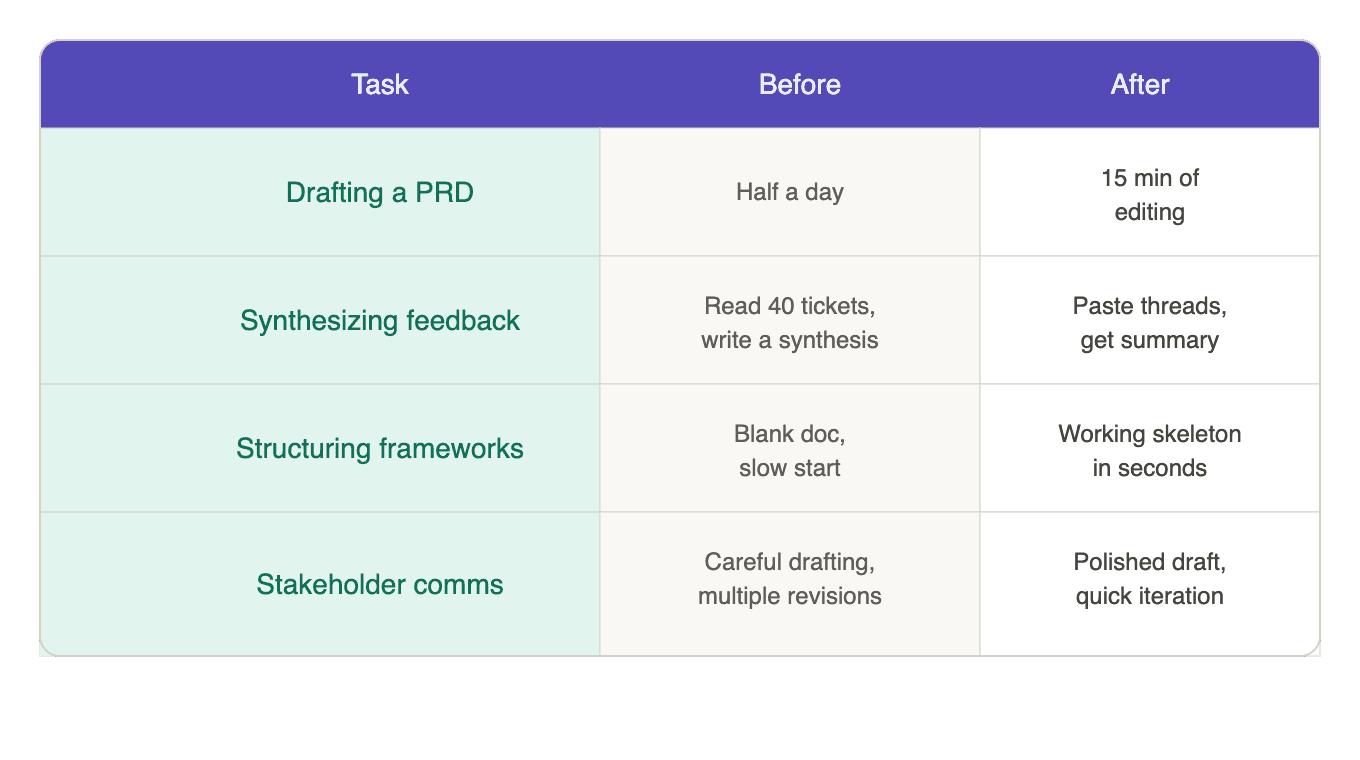

Copilots made PMs faster at writing things up. That's not in dispute. A PRD that used to take half a day now takes fifteen minutes of editing. Synthesizing support tickets, structuring stakeholder updates, and turning messy brainstorm notes into something actionable – all faster. Genuinely, meaningfully faster.

But there's a question that's harder to answer, and most PMs who've used these tools for a few months seem to be circling it: are the decisions any better? Not the documents. Not the throughput. The actual calls: what to build, what to cut, which signal to trust when two signals point in different directions.

The honest answer, for most people, seems to be no. And maybe that shouldn't be surprising. Copilots were designed to act on demand; you ask, they produce. That's what they do, and they do it well. But somewhere along the way, faster outputs were mistaken for better decisions. Those are different things.

What copilots actually solved

Before, a PM staring at an empty document had to generate structure and content at the same time. That's hard. Now the structure comes almost free. You dump your notes, get a skeleton, and edit from there. That's a real improvement, and not just in time saved. There was a real cost to the blank-page problem; it created friction that made people put off writing specs, delay stakeholder updates, and avoid the synthesis work that's actually important. Copilots removed that friction. That matters.

But the operating model around those tasks didn't change at all. The PM still stitches together signals from Slack, pulls metrics from the analytics dashboard, and cross-references what sales said on last week's call with what the support data actually shows. This leaves almost no time for the actual decisions. The copilot helps write the output once you've decided. It doesn't help you see the pattern that improves the decision.

Where the ceiling shows up

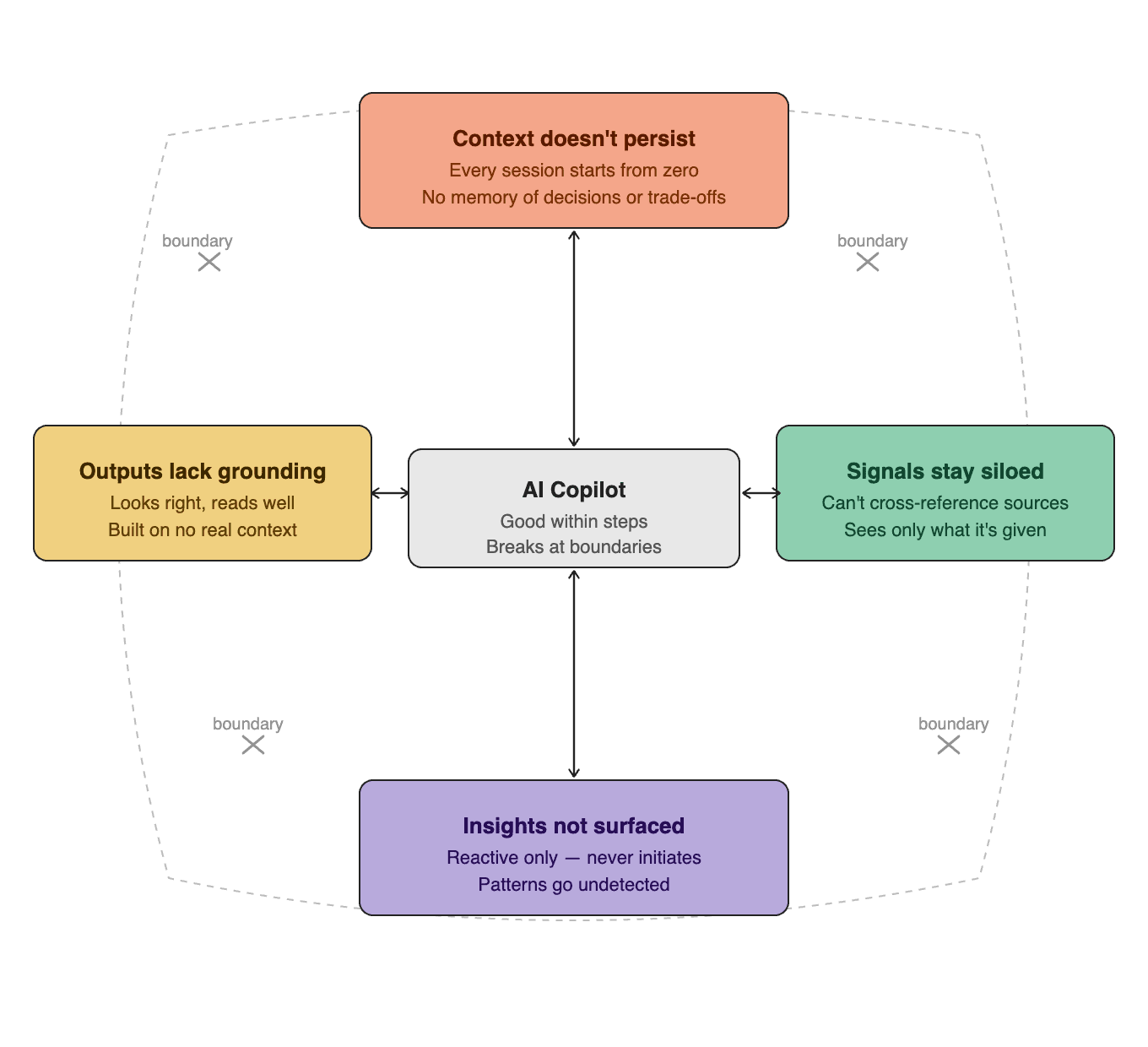

These tools all hit the same wall, and it shows up in four places worth naming because they're where PM work actually happens.

Every conversation starts from zero.

The copilot doesn't know what got deprioritized last quarter or why. It doesn't know which customer segment is driving churn. All of that has to be carried into every prompt, manually, every time. Some PMs work around this by maintaining context files that they paste into each session. But product context isn't really a document. It's a web of relationships between decisions, constraints, people, and timing. Flattening that into a text block loses exactly the parts that matter most.

Tool isolation.

Product work lives across support queues, analytics, CRM, Slack, call recordings, design files, and the roadmap. The copilot only sees what it's given in that moment. It can't pull the support ticket that contradicts the sales feedback. It can't cross-reference product analytics with customer complaints. The time-consuming work of collecting and connecting signals across sources still sits entirely with the PM.

Reactive by design.

Copilots execute when prompted but never initiate. They don't surface patterns like "three enterprise customers reported the same friction point this week, and it maps to the feature that got moved out of scope last month." Connecting the dots still sits with the PM.

The trust problem.

A copilot generates confident, well-structured artifacts in thirty seconds. But it has no basis for knowing whether the artifact is actually right. It doesn't know the CEO cares deeply about one specific account. It doesn't know the team just lost a senior engineer. It doesn't know that a competitor shipped a similar feature last week and that the market context just shifted.

Copilots add a step to the loop

The tools that were supposed to free up time for judgment are actually adding to the cognitive load around it. The PM still gathers the signals, connects the dots, and finds the patterns. That work is unchanged. But now, on top of that, they also feed context into the copilot, get a polished artifact back, and evaluate whether that artifact is actually right. The copilot produces more outputs, and each one still requires the PM's full judgment to evaluate. That judgment still depends on context that took manual effort to gather in the first place.

The workload changed shape. It didn't get smaller.

The natural response is that the next model will fix this. GPT-5, Claude 5, smarter reasoning, larger context windows, better outputs. And maybe the outputs will get better. But look at what's actually causing the problem. It's not intelligence. It's how the system is designed.

A model that's ten times smarter but still starts every conversation blank, still sees only what you paste in, and still waits to be asked will hit the same ceiling.

What can break through

We at FerriX think the breakthrough will come from systems that don't wait to be asked. Systems that take ownership of a goal and work across the product cycle to deliver it.

What that looks like in practice: the system discovers signals on its own (patterns in support tickets, friction points in product data, sales feedback that traces to the same root cause) and connects them before you've started your Monday triage. It surfaces what matters, estimates what's at risk, and drafts a plan.
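To make the "connects them" step concrete, here is a minimal sketch of cross-source signal grouping. It is an illustration only, not a description of any real product's implementation. The `Signal` type, the source labels, the `topic` tag (assumed to be assigned by some upstream classification step), and the thresholds are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    source: str    # hypothetical labels, e.g. "support", "analytics", "sales"
    customer: str
    topic: str     # hypothetical root-cause tag, assumed to be assigned upstream


def surface_patterns(signals, min_sources=2, min_customers=2):
    """Group signals by topic and keep topics corroborated across sources."""
    by_topic = defaultdict(list)
    for s in signals:
        by_topic[s.topic].append(s)

    patterns = []
    for topic, group in by_topic.items():
        sources = {s.source for s in group}
        customers = {s.customer for s in group}
        # Keep a topic only if several sources and several customers agree on it.
        if len(sources) >= min_sources and len(customers) >= min_customers:
            patterns.append({
                "topic": topic,
                "sources": sorted(sources),
                "customers": sorted(customers),
                "count": len(group),
            })

    # Most widely reported topics first; ties broken alphabetically by topic.
    patterns.sort(key=lambda p: (-len(p["customers"]), p["topic"]))
    return patterns


# Made-up example data: one issue seen in three places, one seen only once.
signals = [
    Signal("support", "acme", "sso-setup"),
    Signal("sales", "globex", "sso-setup"),
    Signal("analytics", "initech", "sso-setup"),
    Signal("support", "acme", "export-csv"),
]
summary = surface_patterns(signals)
```

In this sketch, the issue that shows up in support, sales, and analytics is surfaced, while the one-off ticket is not. The hard parts in practice (pulling signals from each tool and tagging them with a shared root cause) are exactly what this example assumes away.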

You open your morning summary and review the analysis. You add context from a customer conversation that the system couldn't access. You adjust the priority based on what you know about the engineering team's current load. You approve the plan.

The assembly still happened. All of it. You just weren't the one doing it. You spent fifteen minutes on the judgment that decided the outcome, not five hours gathering the context that makes judgment possible.

The PM's role doesn't disappear in this model. It shifts to the thing that was always supposed to be the job: deciding what matters and why.