Nishant Kumar


The bottleneck in product development is no longer shipping speed. It's upstream judgment.

Something changed around last December and January: coding agents crossed a threshold. Features that used to take a quarter now ship in weeks. Instead of walking into a stakeholder review with a slide deck, you walk in with a working prototype.

If you're a product manager, you've noticed the change. Engineering used to ask, "Can we get more time?" Now it asks, "What should we build next?" The constraint didn't disappear. It moved upstream, to deciding what to build.

That's product work. And it's where most teams today are least prepared.

The Two Layers of Product Work

Some will argue the answer is fewer PMs and more empowered engineers. That's happening at some companies, and in certain contexts, it makes sense. But for most teams, it isn't that simple. They juggle multiple segments, enterprise accounts, and competing priorities from every direction. The real question is: what should you actually be spending your time on?

Here's the uncomfortable truth. Most PM work today isn't thinking. It's assembling the context required to think.

Consider how you spend a typical week. The work breaks down into two distinct layers, and the split between them reveals the problem.

There's the assembly and execution layer. This is where you gather signals from support tickets, Slack threads, and analytics dashboards, structure plans in documents that few people read more than once, write specs, file tickets, and track whether things ship.

Then there's the judgment and steering layer: evaluating whether the signals actually point where you think they do, setting direction when the data is ambiguous, adjusting priorities when a new constraint surfaces, and adding context that no system has because you were in the room when it was said.

Most PMs spend the majority of their time on assembly. The shift underway is about reclaiming time for judgment.

This isn't a new observation. Every generation of PM tooling has tried to solve it. What's changed is how far the tooling can now reach, and the clearest way to see that is to look at where each prior wave fell short.

How PM Tooling Evolved

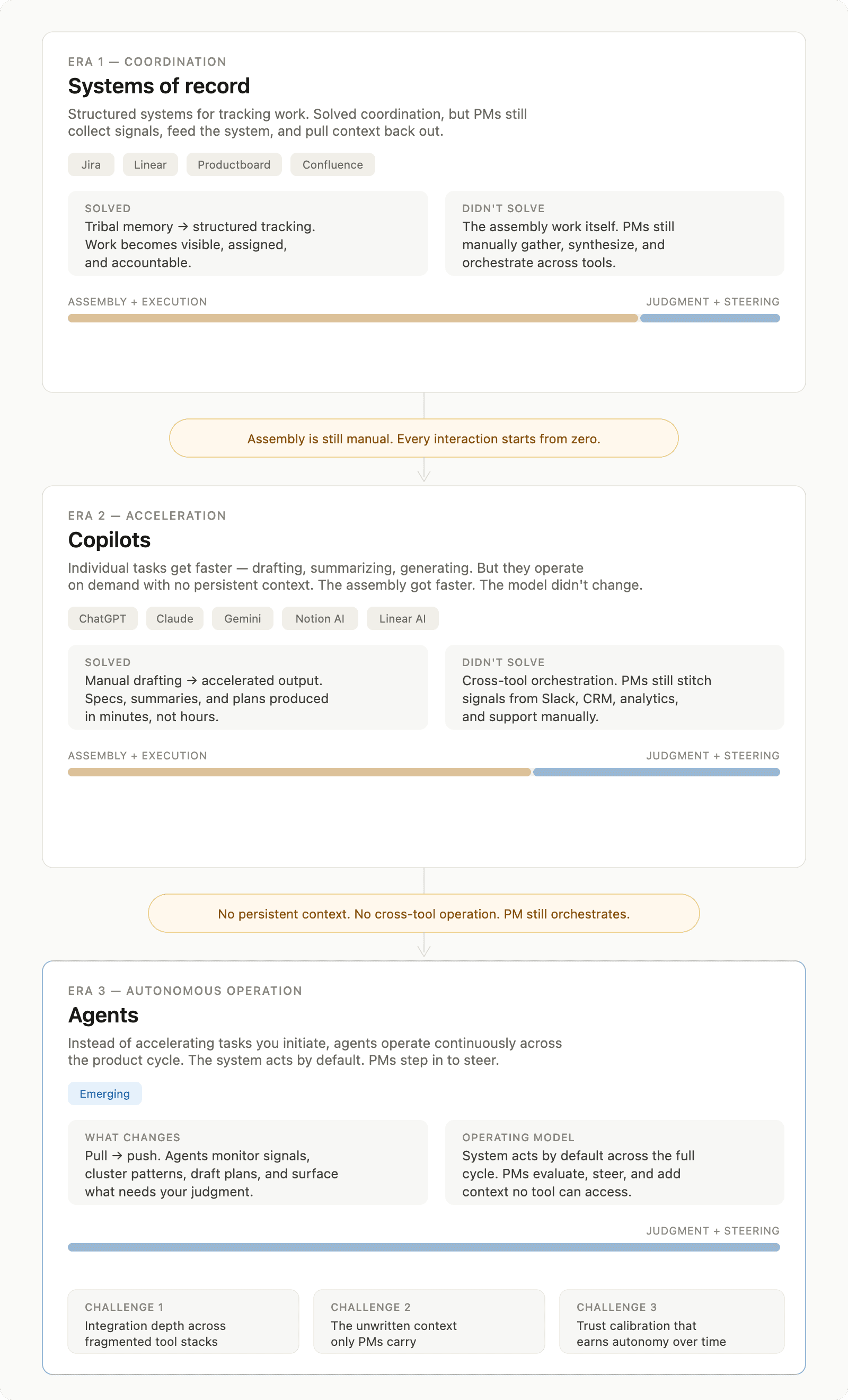

PM tooling has evolved through three stages, each trying to reduce the same assembly overhead. First, systems organized the work. Then copilots sped up individual tasks. Now, agents aim to operate the assembly layer itself.

Systems of record

Tools like Jira, Linear, Productboard, and Confluence solve the coordination problem. Before them, product work lived in spreadsheets, email threads, and tribal memory. These platforms introduced structured tickets with owners, roadmaps with timelines, documentation with version history, and workflows with accountability.

What they didn't solve was the assembly work itself. PMs still collect signals from five different channels, feed them into the system, and pull context back out when it's time to make a decision. The system of record holds the output of your thinking. It doesn't help you think.

Copilots

The copilot wave includes ChatGPT, Claude, Gemini, Notion AI, and Linear's AI features. They all speed up individual tasks. Need to draft a PRD? Paste your notes and get a structured spec in minutes. Summarize a long feedback thread? Done. Generate a prioritization framework from a list of competing requests? Quick.

But copilots operate on demand, with no persistent context. Every interaction starts from zero. You still collect the inputs, choose what to use, and organize the tasks yourself. The assembly got faster. The operating model didn't change. You're still stitching together signals from Slack, pulling data from your analytics tool, checking what the sales and GTM teams shared last week, and deciding which inputs matter. The copilot helps you write the output. It doesn't help you see the pattern.

Agents

Agents operate on a completely different premise. Instead of speeding up tasks you initiate, they take ownership of a goal and work across the product cycle to deliver it. They discover signals, prioritize what matters, draft plans, execute on them, and loop back to check whether the work moved the needle. The PM defines the goal and the guardrails. The agent does the rest until a decision requires your input.

The hard part is making this reliable across messy, real-world product work. But if it works, the operating model changes. Instead of backlog-driven workflows where you manually triage, prioritize, and assign, the system acts by default. You set direction, define what's off the table, and step in when the agent surfaces a call it can't make on its own.

Where Agents Break Down

Agents face two key challenges that determine whether they are genuinely helpful or just flashy in a demo.

The multi-tool problem. Product work doesn't live in a single system. On any given day you move between your project tracker, Slack, analytics, CRM, support tools, and documentation platforms. An agent that can read your project tracker but can't see customer feedback is a toy. So is one that can summarize feedback but can't create tickets. Integration depth across your actual stack matters more than any individual feature. The agent needs to work where your data already lives, not ask you to move it somewhere new.

The context problem. Some of the most important signals in product work never make it into any system. The CEO values the enterprise account, but no one has written down why. You were at the engineering standup when someone said, "It's technically possible, but we'd hate to maintain it." You heard the concern in the customer's voice and knew the problem was worse than the ticket suggested.

Agents work with the data inside tools. The unwritten context is yours: the relationship history, and your gut read on what a team can actually ship well. Any system that ignores this gap will produce plans that miss important details. The question isn't whether agents can replace PM judgment. It's whether they can take on enough of the assembly work that your judgment lands on the right problems at the right time.

Same Problem, Two Eras

The distinction between assembly and judgment becomes clear when you trace a single scenario through both modes of working.

Imagine a reliability issue surfacing across your product. Over the course of a week, several customers report it through different channels. Support tickets mention it. A sales engineer flags it during an important renewal call. An analytics spike shows increased error rates in a specific workflow.

Today, you notice the support tickets during your weekly triage. The sales feedback lives in the CRM, and you find it two days later when the AE mentions it in Slack. Connecting the dots means checking multiple tools, pulling data into a document, estimating impact, writing up the initiative, drafting a spec, creating tickets for engineering, and then circling back to see whether the fix moved the metrics.

A week of assembly work before the real question gets answered: how urgent is this, and what should we do about it?

With an agentic system, agents identify patterns in support tickets and product signals before you even start your Monday triage. They group the reports, link the sales feedback to the same root cause, estimate potential revenue loss from the affected accounts, and draft a remediation plan with recommendations for scope and priority.

You open your morning summary and review the analysis. You add context from a customer conversation that the system couldn't access. Then, you adjust the priority based on what you know about the engineering team's current load. Finally, you approve the plan.

The assembly still happened. You're just not the one doing it. You spent fifteen minutes on the judgment that decided the outcome, not five hours gathering the context that came before it.

What This Asks of You

When the assembly layer shrinks, what remains is work that only a human can do. This includes tasks that require organizational context, strategic awareness, and product taste.

Evaluation becomes continuous. Agents will generate signals, plans, and specs across every phase of the product cycle. You shift from making these artifacts to evaluating them: looking for what's missing, what's misweighted, and where the reasoning fails. This is a different muscle from writing a PRD from scratch. You need to see the whole picture and test someone else's logic instead of building your own.

Steering over specifying. You set the quarter's focus through direction and constraints: which trade-offs to accept, which outcomes to aim for, and which risks are off-limits. The shift is from author to editor, from driver to navigator. You define the destination and the boundaries. The system figures out the route.

Systems thinking. Agent output in one phase becomes input in the next. A misweighted signal during discovery leads to a flawed initiative brief. A flawed brief produces the wrong spec. A wrong spec creates tickets that solve a problem no one actually has. The PM's job is to spot misalignment early, which means seeing how each phase connects rather than reviewing each artifact in isolation.

Trust calibration is a skill. Not everything an agent produces needs a thorough review, but not everything can be accepted as-is either. The work is knowing when to dive deep and when to let the system run, and recalibrating as you learn where the system is reliable and where it needs tighter control. Good managers develop the same skill with their teams; here it applies to a different kind of collaborator.

The Shift Underway

Engineering got faster. The constraint moved upstream. The tooling is changing too. First it organized work. Now it acts as a copilot that speeds up tasks. Next come agents that accomplish the goal itself.

The category is early. The integration challenges are real, the context gap is unsolved, and trust takes time to build. But the core issue stands: most PM time still goes to assembly and execution, and the technology that can take that work on is advancing quickly.

The role of the PM isn't changing. What surrounds it is. The decisions that matter (what to build, for whom, and why now) have always been the core of the job. The difference is that PMs who excel at judgment and steering will have more time to do it well, while PMs whose value lies in assembly work will find it harder to defend.

This is the direction we're building toward at FerriX: agents that handle the assembly and execution layer so PMs can focus on judgment and steering. The category is still forming, but the shift is already underway. The PMs who lean into it won't just keep up. They'll operate at a level that wasn't possible before.